In this article, we are going to discuss about implementing Euclidean distance in PostgreSQL database. Before getting into actual implementation, let me give you a quick background to understand the need for writing this article. I have been working on Face Authentication system and to perform Face Verification task, we need to compute the distance between two faces. There are a lot of implementations out there to achieve this using Python, however it did not help in my case, so I have implemented the Euclidean distance computation in PostgreSQL. Let's see about the challenges and solutions in detail.

Face Recognition System:

- Face Registration

- Face Verification

Face Registration:

This part contains below steps:

- Users will register their faces with name and image

- The registered photos will be stored in folder with name as folder name

- The user images will be feed to Convolutional Neural Network (CNN) model and extract 128 measurements for each image.

- Using a K-Nearest Neighbour classifier to train a classifier with name and their corresponding 128 dimension encodings.

- Save the classifier as pickle file on application directory.

Face Verification:

This part contains below steps:

- When a new user try to login with their image, it will be feed to same Convolutional Neural Network (CNN) and extract 128 dimension number vector.

- This 128 dimension vector is passed to classifier and it will be compared against all the pre-trained face encodings.

- The classifier will return name of the encodings where distance comparison value is less than 0.3 (as I defined threshold as 0.3)

The Challenges:

- Adding new users to system is complicated as it requires training for every users.

- Each time we add new user, need to train all the existing registered user images, generating 128 dimension number vector for all the images during training is time & resource consuming process, it requires higher GPU processing and doing this for each new users is not the right way.

- Deleting existing user is complicated as it deals with classifier object as pickle file.

- Parallelising to multiple servers with this approach is not feasible, as classifier stored as pickle file, we need to keep moving this file to all the servers, every-time we make changes to training data.

- Cannot add multiple images for a person as it increases the computation.

The Solution:

After dealing with these issues for sometime, I have changed my approach to overcome above mentioned challenges. The current implementation works as follows:

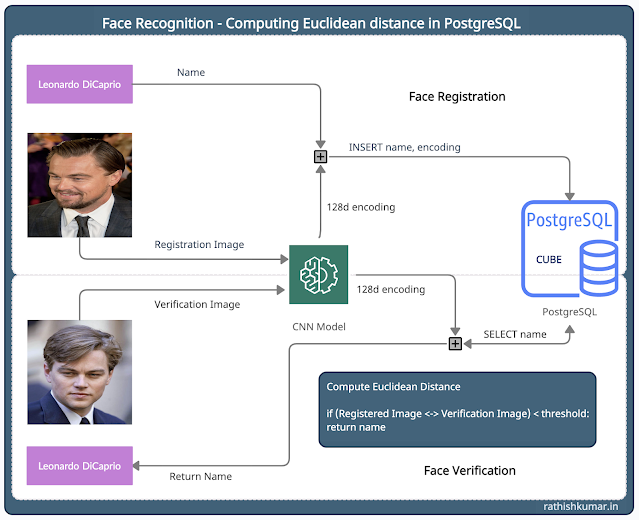

|

| Face Recognition - Computing Euclidean distance in PostgreSQL |

Face Registration:

- User registers with name and image

- Extract the 128 dimension number vector by feeding into Convolutional Neural Network (CNN) model with pre-trained weights.

- Store name and encoding in PostgreSQL database (you can store it in any database, I will explain the reason for storing it in PostgreSQL later in the post, keep reading)

Face Verification:

- User login with Image

- Extract the 128 dimension number vector by feeding into Convolutional Neural Network (CNN) model with pre-trained weights.

- Compute the Euclidean distance between the new user encoding with existing encodings stored in PostgreSQL using SQL statement.

- Authenticate the user based on the distance value (true, if d < 03)

So how this solution helps?

- We removed training part completely, as adding a new user is now INSERT operation.

- We can add any number of images per user as it's going to be inserted as new records in a table.

- We can remove/delete/update individual user records without additional overhead with DELETE & UPDATE operation.

- Computations in PostgreSQL is faster as compared to Python implementation.

- We parallelise and scale this architecture to any number of users in future - currently I am performing inference with 25K encodings, that is 1:25K (this is not a big thing in database!!!)

Why PostgreSQL?

By now, you will be wondering why PostgreSQL specifically, as this can be done in any SQL database. Let me give you my reasons for going with PostgreSQL.

- CUBE datatype - yes I can store entire 128 dimension number vector in a single column (this is really awesome 😎 )

- In-built function for Euclidean distance computation (<->)

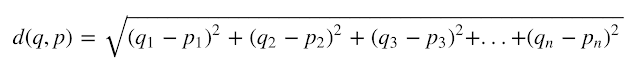

If you are trying to do this in any other database, please mention the query in comment section, it will be helpful to others. Formula for computing euclidean distance is given below:

Euclidean Distance:

|

| Euclidean distance for higher dimension |

Refer this wikipedia page for more details. Okay, let's jump into implementation.

Installing PostgreSQL from source:

- Download the latest PostgreSQL source code files from here: https://www.postgresql.org/ftp/source/

- The version, I am installing is postgresql-13.2.tar.bz2 (March, 2021) - you can install the latest version.

- Unzip the source code to local directory: tar -xvjf postgresql-13.2.tar.bz2

- Edit the cubedata.h file located at /contrib/cube/cubedata.h: vi /contrib/cube/cubedata.h

- Change the CUBE_MAX_DIM to value to 128 from 100, after changing, it looks like: #define CUBE_MAX_DIM (128)

- Run the below command in order - this is short version of installation from source code, if you are looking for detailed steps, visit official documentation: https://www.postgresql.org/docs/12/install-requirements.html

./configure

make

su

make install

adduser postgres

mkdir /usr/local/pgsql/data

chown postgres /usr/local/pgsql/data

su - postgres

/usr/local/pgsql/bin/initdb -D /usr/local/pgsql/data

/usr/local/pgsql/bin/pg_ctl -D /usr/local/pgsql/data -l

\logfile start

/usr/local/pgsql/bin/createdb test

/usr/local/pgsql/bin/psql testGo to the contrib/cube directory and install the extension by running the below commands:

make

make install

Lets create a database and user for our Face Recognition system:

su - postgres

/usr/local/pgsql/bin/createdb facedb

/usr/local/pgsql/bin/psql facedb

CREATE USER face WITH PASSWORD = ‘face’;

GRANT ALL PRIVILEGES ON DATABASE facedb TO face;To use CUBE extension from facedb we need to create the extension under this database, to do that, run the following SQL statement:

CREATE EXTENSION cube;Create a table for storing Employee ID, Name, Encoding - you can skip the employeeid column - I am adding it to get Employee ID in addition with Name at the Face Verification:

CREATE TABLE encodings

(

encodeId serial,

employeeid char(8) default '00000000',

employeename varchar,

encodevector cube

);Also create an index on CUBE column to retrieve the data faster, as follows:

CREATE INDEX encodevector_idx ON encodings(encodevector);Great!!!, we are done with database part, lets create Python function to call from registration and verification code:

Import the required packages:

from psycopg2.pool import SimpleConnectionPool

from contextlib import contextmanagerDefine the database and connection parameters:

hostname = 'localhost'

dbname = 'facedb'

dbuser = 'face'

dbpass = 'face'

db = SimpleConnectionPool(1, 10,host=hostname,database=dbname,user=dbuser,

password=dbpass)

@contextmanager

def get_connection():

con = db.getconn()

try:

yield con

finally:

db.putconn(con)Define the function to INSERT encodings, name to PostgreSQL table:

def saveDb(employeeId, name, face_encodings):

with get_connection() as conn:

try:

cursor = conn.cursor()

query = "INSERT INTO encodings(employeeid, employeename, encodevector) VALUES ('{}','{}', CUBE(array[{}]))".format(employeeId, name, ','.join(str(s) for s in face_encodings))

cursor.execute(query)

cursor.close()

conn.commit()

return 1

except:

conn.rollback()

return 0

Function to compare the encoding with existing encodings from table and returns the corresponding name and employee ID

def findDb(face_encodings, threshold=0.4):

with get_connection() as conn:

try:

name = 'Unknown'

empid = '0000000'

cursor = conn.cursor()

query = "SELECT employeename, employeeid FROM encodings WHERE sqrt(power(CUBE(array[{}]) <-> encodevector, 2)) <= {} ".format(','.join(str(s) for s in face_encodings), threshold) + "ORDER BY sqrt(power(CUBE(array[{}]) <-> encodevector, 2)) ASC LIMIT 1".format(','.join(str(s) for s in face_encodings))

cursor.execute(query)

data = cursor.fetchone()

if data == None:

return name, empid

else:

return data

except Exception as e:

return eI hope this article is helpful to you. Please write in the comment section, if you are facing any challenges with this implementation. I will be glad to assist you.

Wow, Good Thought You Are Sharing With Us Great Post And Thought We Need To Work On Also And managing The All-Round Software Solutions

ReplyDeleteIs this possible for MS SQL server?

ReplyDeleteNot sure, why name was showing as unknown - let me know, if it works for you, thanks.

DeleteRathish Kumar B

Hi!

ReplyDeleteGreat solutions! I work a lot with postgres, first time with face recognition data.

I Try to analyze select query and its not using index scan, instead uses seq scan.. Can You help to create correct index?

Hi,

ReplyDeletei am following your project in C#, but stuck in sql text can you please post a sample of format for insert encoding, this will help lot

Hello, thanks for the tutorial. I am trying to do the same, however I will use appropriate k-means since it is faster. Is it possible you can show the implementation done in C/C++? thanks.

ReplyDeletecursor.execute(query)

ReplyDeletepsycopg2.errors.SyntaxError: syntax error at or near "0.13080576"

LINE 1: ...('FG1M_UOVUAI2JFY0.jpg', CUBE(array[[ 0.06245649 0.13080576...

cursor.execute(query)

ReplyDeletepsycopg2.errors.SyntaxError: syntax error at or near "0.13080576"

LINE 1: ...('FG1M_UOVUAI2JFY0.jpg', CUBE(array[[ 0.06245649 0.13080576...